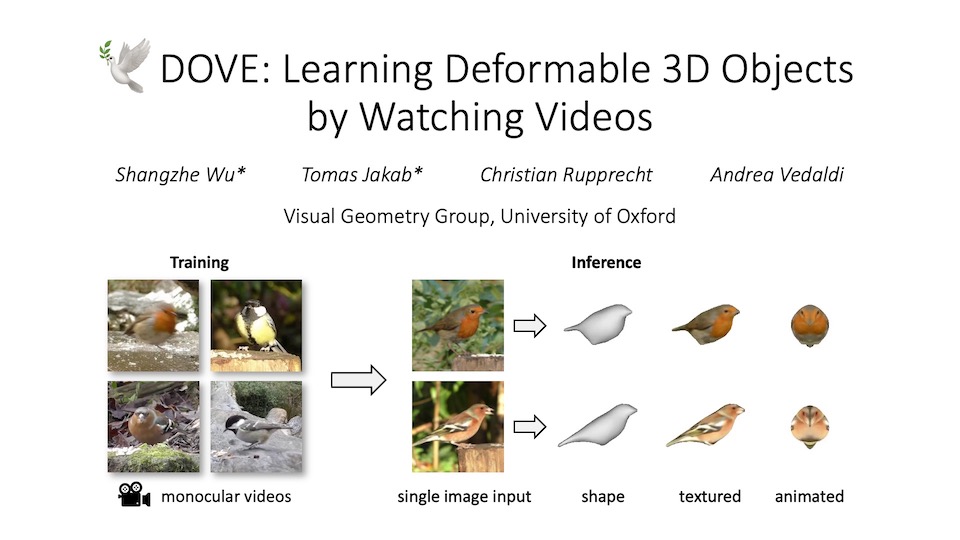

GaussianAnything: Interactive Point Cloud Latent Diffusion for 3D Generation

Yushi Lan¹, Shangchen Zhou¹, Zhaoyang Lyu², Fangzhou Hong¹, Shuai Yang³, Bo Dai², Xingang Pan¹, Chen Change Loy¹

¹ S-Lab, NTU Singapore; ² Shanghai AI Lab; ³ Peking University

[Paper] [Code] [BibTeX]

While 3D content generation has advanced significantly, existing methods still face challenges with input formats, latent space design, and output representations. This paper introduces a novel 3D generation framework that addresses these challenges, offering scalable, high-quality 3D generation with an interactive Point Cloud-structured Latent space. Our framework employs a Variational Autoencoder (VAE) with multi-view posed RGB-D(epth)-N(ormal) renderings as input, using a unique latent space design that preserves 3D shape information, and incorporates a cascaded latent diffusion model for improved shape-texture disentanglement. The proposed method, GaussianAnything, supports multi-modal conditional 3D generation, allowing for point cloud, caption, and single/multi-view image inputs. Notably, the newly proposed latent space naturally enables geometry-texture disentanglement, thus allowing 3D-aware editing. Experimental results demonstrate the effectiveness of our approach on multiple datasets, outperforming existing methods in both text- and image-conditioned 3D generation.
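To make the idea of a point cloud-structured latent and cascaded sampling concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not the released implementation: the names `PointCloudLatent`, `sample_cascaded`, and `translate_points` are hypothetical, and the two "denoising steps" are toy stand-ins for learned diffusion networks. The sketch assumes the latent is a set of 3D points with one feature vector per point, that geometry is sampled first and texture features second, conditioned on the sampled points, and that an edit can move points without touching their features.

```python
import random
from dataclasses import dataclass
from typing import Callable, List

Vec = List[float]


@dataclass
class PointCloudLatent:
    """Hypothetical latent: N points, each with a position and a feature."""
    xyz: List[Vec]   # N positions, 3 floats each (geometry)
    feat: List[Vec]  # N feature vectors, C floats each (texture)


def gaussian_noise(n: int, dim: int, rng: random.Random) -> List[Vec]:
    """Draw an n x dim list of standard normal samples."""
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n)]


def sample_cascaded(
    geometry_step: Callable[[List[Vec], int], List[Vec]],
    texture_step: Callable[[List[Vec], List[Vec], int], List[Vec]],
    n_points: int,
    feat_dim: int,
    steps: int,
    rng: random.Random,
) -> PointCloudLatent:
    """Two-stage sampling: positions first, then features given positions."""
    # Stage 1: refine noisy positions into a point cloud.
    xyz = gaussian_noise(n_points, 3, rng)
    for t in reversed(range(steps)):
        xyz = geometry_step(xyz, t)
    # Stage 2: refine noisy features, conditioned on the fixed positions.
    feat = gaussian_noise(n_points, feat_dim, rng)
    for t in reversed(range(steps)):
        feat = texture_step(feat, xyz, t)
    return PointCloudLatent(xyz, feat)


def translate_points(latent: PointCloudLatent, offset: Vec) -> PointCloudLatent:
    """Geometry-only edit: shift every point, copy features unchanged."""
    moved = [[c + o for c, o in zip(p, offset)] for p in latent.xyz]
    return PointCloudLatent(moved, [list(f) for f in latent.feat])


# Toy stand-ins for learned denoisers (assumptions, for illustration only).
def toy_geometry_step(xyz: List[Vec], t: int) -> List[Vec]:
    return [[0.9 * c for c in p] for p in xyz]


def toy_texture_step(feat: List[Vec], xyz: List[Vec], t: int) -> List[Vec]:
    # Each feature is nudged by the mean coordinate of its own point.
    return [[0.9 * v + 0.1 * sum(p) / 3.0 for v in f] for f, p in zip(feat, xyz)]


latent = sample_cascaded(
    toy_geometry_step, toy_texture_step,
    n_points=16, feat_dim=4, steps=5, rng=random.Random(0),
)
edited = translate_points(latent, [0.1, 0.0, 0.0])
```

In this toy setup, `edited.feat` equals `latent.feat` while every point in `edited.xyz` is shifted along the x-axis, which mirrors the kind of geometry-texture separation the abstract describes. A real system would replace the toy steps with trained networks and use a tensor library rather than nested lists.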

Could internal representations from text-to-image diffusion models contribute to processing multiple, diverse images? We delve into the application of Stable Diffusion (SD) features for high-quality semantic and dense correspondence. Remarkably, our findings indicate that, with straightforward post-processing, SD features can perform quantitatively on par with state-of-the-art representations.