Some say AI will make war more humane. Israel’s war in Gaza shows the opposite.

Israel has reportedly been using AI to guide its war in Gaza, and treating the AI's decisions almost as gospel. In fact, one of the AI systems being used is literally called “The Gospel.”

According to a major investigation published last month by the Israeli outlet +972 Magazine, Israel has been relying on AI to decide whom to target for killing, with humans playing an alarmingly small role in the decision-making, especially in the early stages of the war. The investigation, which builds on a previous exposé by the same outlet, describes three AI systems working in concert.

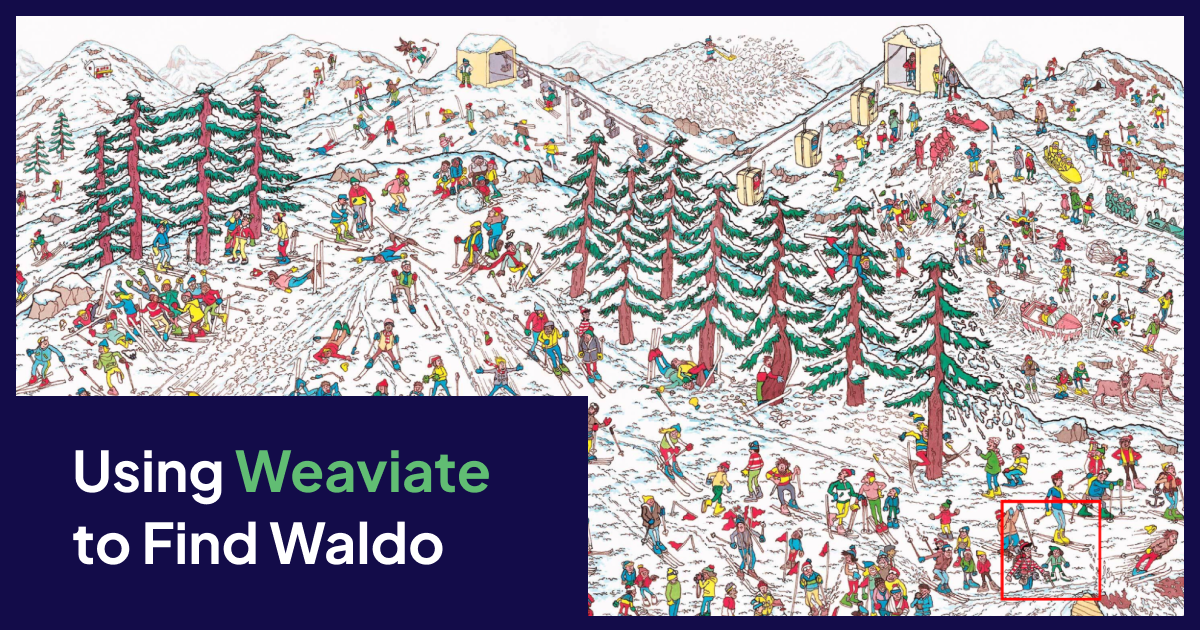

“Gospel” marks buildings that it says Hamas militants are using. “Lavender,” which is trained on data about known militants, then trawls through surveillance data about almost everyone in Gaza — from photos to phone contacts — to rate each person’s likelihood of being a militant. It puts those who get a higher rating on a kill list. And “Where’s Daddy?” tracks these targets and tells the army when they’re in their family homes, an Israeli intelligence officer told +972, because it’s easier to bomb them there than in a protected military building.

The result? According to the Israeli intelligence officers interviewed by +972, some 37,000 Palestinians were marked for assassination, and thousands of women and children have been killed as collateral damage because of AI-generated decisions. As +972 wrote, “Lavender has played a central role in the unprecedented bombing of Palestinians,” which began soon after Hamas’s deadly attacks on Israeli civilians on October 7.