OpenScholar: The open-source AI that’s outperforming GPT-4o in scientific research

Scientists are drowning in data. With millions of research papers published every year, even the most dedicated experts struggle to stay updated on the latest findings in their fields.

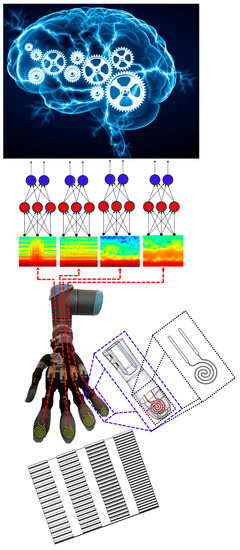

A new artificial intelligence system, called OpenScholar, is promising to rewrite the rules for how researchers access, evaluate, and synthesize scientific literature. Built by the Allen Institute for AI (Ai2) and the University of Washington, OpenScholar combines cutting-edge retrieval systems with a fine-tuned language model to deliver citation-backed, comprehensive answers to complex research questions.

“Scientific progress depends on researchers’ ability to synthesize the growing body of literature,” the OpenScholar researchers wrote in their paper. But that ability is increasingly constrained by the sheer volume of information. OpenScholar, they argue, offers a path forward—one that not only helps researchers navigate the deluge of papers but also challenges the dominance of proprietary AI systems like OpenAI’s GPT-4o.

At OpenScholar’s core is a retrieval-augmented language model that taps into a datastore of more than 45 million open-access academic papers. When a researcher asks a question, OpenScholar doesn’t merely generate a response from pre-trained knowledge, as models like GPT-4o often do. Instead, it actively retrieves relevant papers, synthesizes their findings, and generates an answer grounded in those sources.
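
To make the retrieve-then-generate idea concrete, here is a minimal Python sketch of the general pattern. It is not OpenScholar's actual code: the three-entry `PAPERS` dictionary, the bag-of-words cosine scoring, and the `retrieve` and `build_prompt` helpers are all hypothetical stand-ins, and the real system presumably uses far more capable retrieval over its 45-million-paper datastore. The sketch only shows the flow of finding relevant passages first and then handing them to a language model as citable sources.

```python
import math
import re
from collections import Counter

# Hypothetical toy datastore; the IDs and texts are invented for illustration.
PAPERS = {
    "P1": "Retrieval augmented language models ground answers in retrieved documents.",
    "P2": "Protein folding prediction improved with deep learning methods.",
    "P3": "Citation accuracy of language models improves with retrieval of scientific papers.",
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(count * b[token] for token, count in a.items() if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, papers, k=2):
    """Return IDs of up to k papers most similar to the question.

    Papers with zero word overlap are dropped rather than padded in.
    """
    query = Counter(tokenize(question))
    scored = [(cosine(query, Counter(tokenize(text))), pid)
              for pid, text in papers.items()]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))  # best score first
    return [pid for score, pid in scored[:k] if score > 0]


def build_prompt(question, papers, paper_ids):
    """Assemble a prompt that asks a model to answer from the cited sources only."""
    sources = "\n".join(f"[{pid}] {papers[pid]}" for pid in paper_ids)
    return (
        "Answer using only the sources below and cite them by ID.\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {question}\nAnswer:"
    )


question = "How does retrieval help language models cite scientific papers?"
top_ids = retrieve(question, PAPERS)
prompt = build_prompt(question, PAPERS, top_ids)
print(prompt)  # this string would be sent to a language model for the final answer
```

In this toy version the unrelated protein-folding entry never reaches the prompt, which is the point of the design: the model is asked to answer from the retrieved sources rather than from whatever it memorized during training.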