Researchers demonstrate that malware can be hidden inside AI models

Researchers Zhi Wang, Chaoge Liu, and Xiang Cui published a paper last Monday demonstrating a new technique for slipping malware past automated detection tools—in this case, by hiding it inside a neural network.

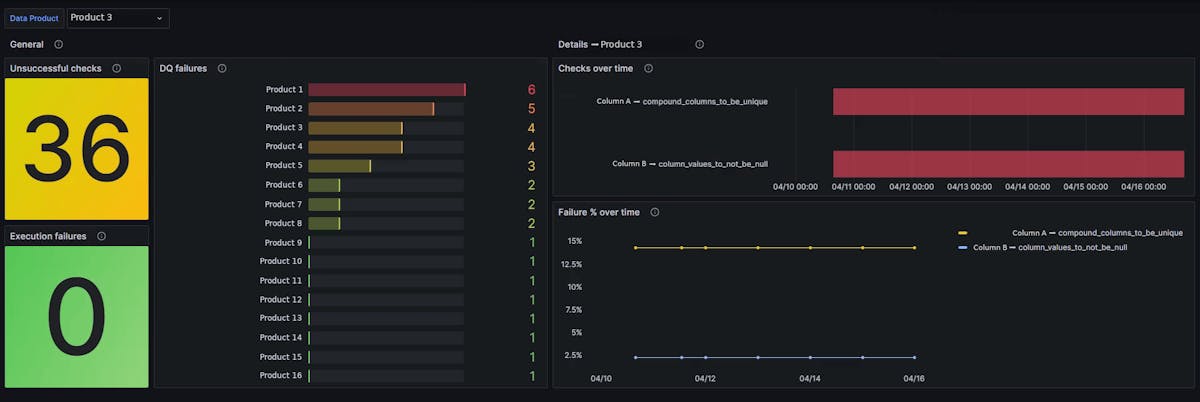

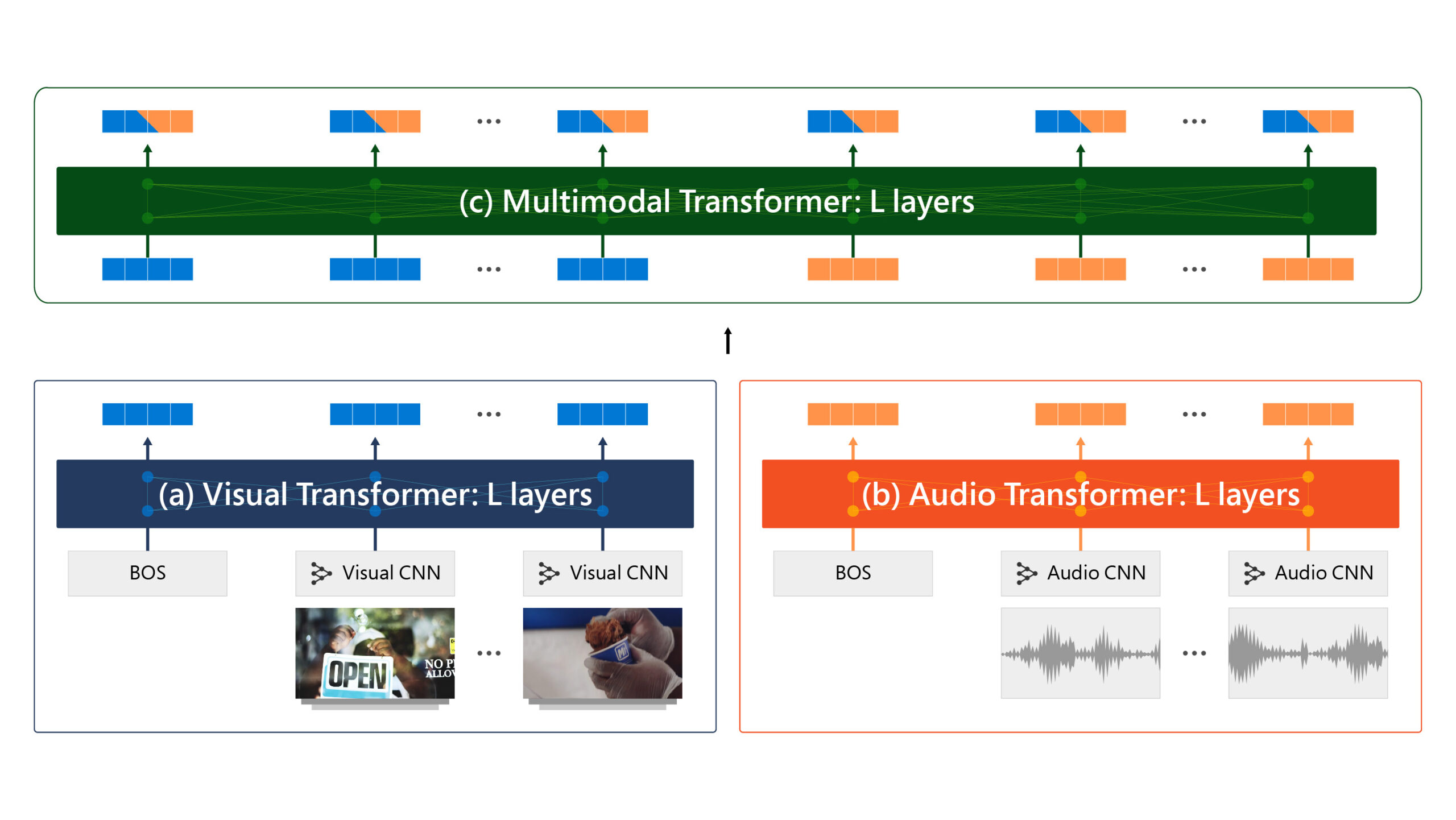

The three researchers embedded 36.9MiB of malware into a 178MiB AlexNet model without significantly altering the function of the model itself. The malware-embedded model classified images with near-identical accuracy, within 1% of the malware-free model. (This is possible because the number of layers and the total number of neurons in a convolutional neural network are fixed prior to training, which means that, much like in human brains, many of the neurons in a trained model end up being either largely or entirely dormant.)
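
To make the idea concrete, here is a minimal sketch of one way arbitrary bytes could be tucked into a layer's weights. It assumes a scheme this article does not spell out: each 32-bit float keeps its most significant byte (the sign and the top seven exponent bits) and has its three low-order bytes overwritten. The function names `embed_bytes` and `extract_bytes`, the layer shape, and the placeholder payload are all hypothetical, and the payload is harmless text. Read it as an illustration of why the weights move so little, not as the researchers' implementation.

```python
import numpy as np

def embed_bytes(weights, payload, n_low=3):
    """Return a copy of `weights` with `payload` written into the n_low
    least-significant bytes of consecutive float32 values (assumed scheme)."""
    flat = np.array(weights, dtype="<f4").ravel()   # private little-endian copy
    raw = flat.view(np.uint8).reshape(-1, 4)        # one row of 4 bytes per weight
    data = np.frombuffer(payload, dtype=np.uint8)
    n_full, rem = divmod(len(data), n_low)
    if n_full + (1 if rem else 0) > raw.shape[0]:
        raise ValueError("payload exceeds the capacity of this layer")
    # Byte 3 of each row (sign + high exponent bits) is left untouched.
    raw[:n_full, :n_low] = data[: n_full * n_low].reshape(n_full, n_low)
    if rem:
        raw[n_full, :rem] = data[n_full * n_low:]
    return flat.reshape(np.shape(weights))

def extract_bytes(weights, length, n_low=3):
    """Read `length` bytes back out of the low-order bytes of the weights."""
    raw = np.ascontiguousarray(weights, dtype="<f4").ravel().view(np.uint8)
    return raw.reshape(-1, 4)[:, :n_low].tobytes()[:length]

# Hypothetical stand-in for one trained layer, plus a harmless payload.
rng = np.random.default_rng(0)
layer = rng.normal(0.0, 0.05, size=(256, 256)).astype(np.float32)
payload = b"harmless placeholder bytes " * 1000

stego = embed_bytes(layer, payload)
assert extract_bytes(stego, len(payload)) == payload   # round trip is lossless

changed = stego != layer
ratio = np.abs(stego[changed] / layer[changed])
print("weights touched:", int(changed.sum()), "of", layer.size)
print("largest relative change (ratio):", float(ratio.max()))
```

Under this assumed scheme every modified weight keeps its sign and stays within a factor of four of its original magnitude, because only the lowest exponent bit and the mantissa can change. That is one plausible reading of why a model with many dormant neurons can absorb the change with little accuracy loss.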

Just as importantly, squirreling the malware away into the model broke it up in ways that prevented detection by standard antivirus engines. VirusTotal, a service that "inspects items with over 70 antivirus scanners and URL/domain blocklisting services, in addition to a myriad of tools to extract signals from the studied content," did not raise any suspicions about the malware-embedded model.
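
A toy illustration of why breaking the payload up matters: if bytes are scattered across weights so that every few payload bytes are separated by a byte belonging to the model, a scanner that looks for a contiguous byte signature no longer sees it. The sketch below is deliberately simplistic and rests on assumptions. `naive_scan` is a hypothetical stand-in for signature matching (real antivirus engines use far more than substring search), `spread` imitates a three-bytes-per-weight layout with a made-up filler byte, and the "signature" is harmless text.

```python
def spread(payload: bytes, keep_byte: int = 0x3C, n_low: int = 3) -> bytes:
    """Imitate storing n_low payload bytes per 4-byte weight: after every
    n_low payload bytes, one byte that belongs to the model is interposed."""
    out = bytearray()
    for i in range(0, len(payload), n_low):
        out += payload[i:i + n_low].ljust(n_low, b"\x00")  # pad the final chunk
        out.append(keep_byte)                               # the weight's own byte
    return bytes(out)

def naive_scan(blob: bytes, signature: bytes) -> bool:
    """Hypothetical scanner: flags a blob only if the signature appears contiguously."""
    return signature in blob

signature = b"EXAMPLE-SIGNATURE"            # harmless marker, not a real signature
payload = b"prefix " + signature + b" suffix"

print("plain payload flagged: ", naive_scan(payload, signature))
print("spread payload flagged:", naive_scan(spread(payload), signature))
```

The bytes are all still present and recoverable by anyone who knows the layout, but no run longer than three payload bytes survives intact, so a contiguous match fails.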

The researchers' technique chooses the best layer to work with in an already-trained model and then embeds the malware into that layer. In an existing trained model (for example, a widely available image classifier), the embedding may have an undesirably large impact on accuracy because the model does not have enough dormant or mostly dormant neurons.
