0xabad1dea / copilot-risk-assessment.md

GitHub was kind enough to grant me swift access to the Copilot test phase despite me @'ing them several hundred times about ICE. I would like to examine it not in terms of productivity, but security. How risky is it to allow an AI to write some or all of your code?

Ultimately, a human being must take responsibility for every line of code that is committed. AI should not be used for "responsibility washing." However, Copilot is a tool, and workers need their tools to be reliable. A carpenter doesn't have to worry about her hammer suddenly turning evil and creating structural weaknesses in a building. Programmers should be able to use their tools with confidence, without worrying about proverbial foot-guns.

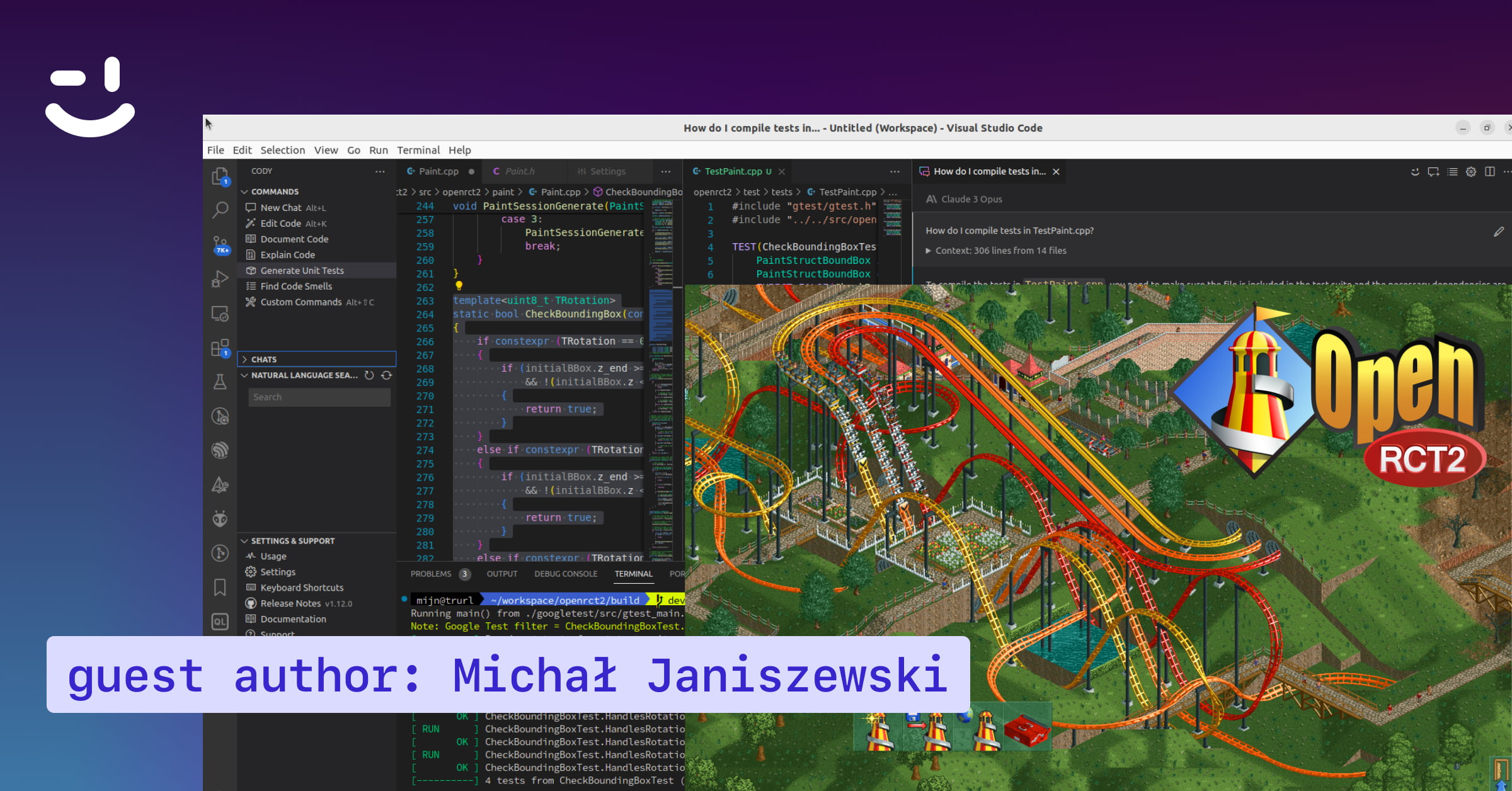

A follower of mine on Twitter joked that they can't wait to allow Copilot to write a function to validate JSON web tokens so they can commit the code without even looking. I prompted Copilot accordingly and the result was truly comedic:

This is the worst possible implementation short of also deleting your hard drive, but it's so obviously, trivially wrong that no professional programmer could think otherwise. I am more interested in whether Copilot generates bad code that looks reasonable at first glance, something that might slip by a programmer in a hurry, or seem correct to a less experienced coder. (Spoiler: yes.)
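For contrast, here is a minimal sketch of the checks a token validator cannot skip, assuming an HS256-signed token and a shared secret. The function name `validate_jwt_hs256`, the helper `_b64url_decode`, and the choice to treat the `exp` claim as optional are illustrative assumptions, not anything Copilot produced. Real projects should rely on a maintained JWT library rather than hand-rolled code like this.

```python
import base64
import hashlib
import hmac
import json
import time


def _b64url_decode(segment: str) -> bytes:
    # JWT segments are base64url without padding; restore it before decoding.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def validate_jwt_hs256(token: str, secret: bytes) -> bool:
    """Return True only for a well-formed, unexpired HS256 token signed with `secret`."""
    try:
        header_b64, payload_b64, signature_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        payload = json.loads(_b64url_decode(payload_b64))
        signature = _b64url_decode(signature_b64)
    except (ValueError, TypeError):
        # Wrong number of segments, bad base64, or bad JSON.
        return False

    if not isinstance(header, dict) or not isinstance(payload, dict):
        return False

    # Pin the algorithm instead of trusting whatever the token claims
    # (this rejects "alg": "none" and algorithm-confusion tricks).
    if header.get("alg") != "HS256":
        return False

    # Recompute the signature over the exact bytes that were signed and
    # compare in constant time.
    signing_input = f"{header_b64}.{payload_b64}".encode("ascii")
    expected = hmac.new(secret, signing_input, hashlib.sha256).digest()
    if not hmac.compare_digest(signature, expected):
        return False

    # Assumption for this sketch: "exp" is optional, but if present it
    # must be a number in the future.
    exp = payload.get("exp")
    if exp is not None:
        if not isinstance(exp, (int, float)) or time.time() >= exp:
            return False

    return True
```

Even this sketch leaves out issuer and audience checks, clock-skew tolerance, and key rotation, which is part of why a validator that merely looks reasonable deserves a careful review.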