Intuiting Adversarial Examples in Neural Networks via a Simple Computational Experiment

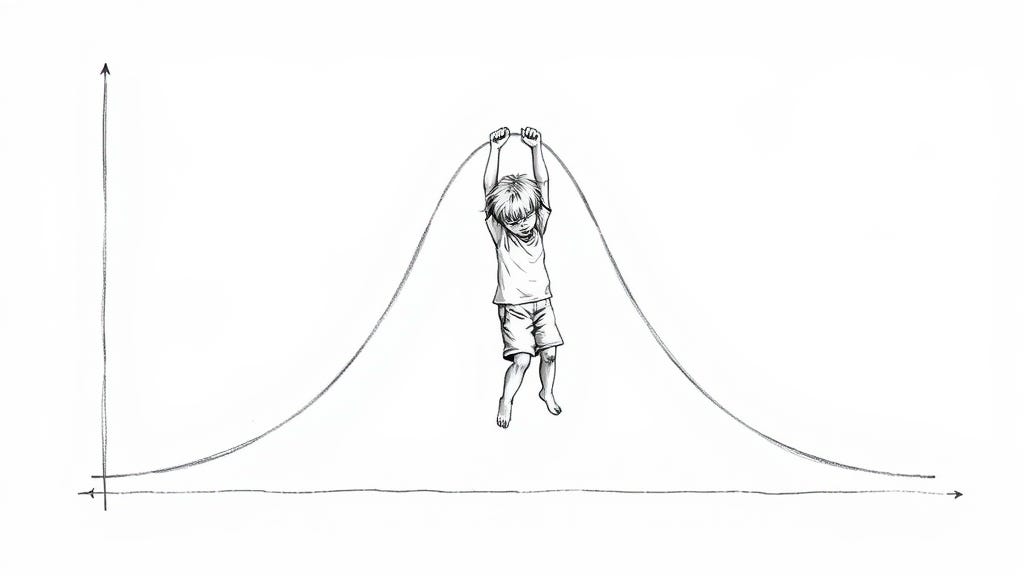

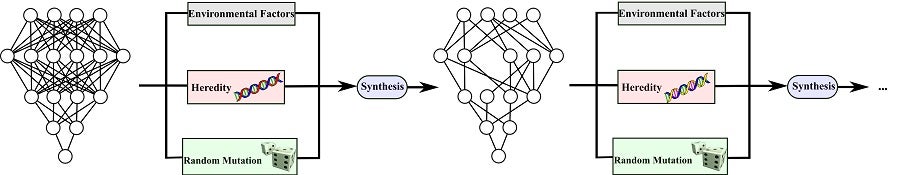

The network becomes book-smart in a particular area but not street-smart in general. The training procedure is like a series of exams on material within a tiny subject area (your data subspace). The network refines its knowledge in the subject area to maximize its performance on those exams, but it doesn't refine its knowledge outside that subject area. That leaves it vulnerable to adversarial examples built from inputs outside the subject area.

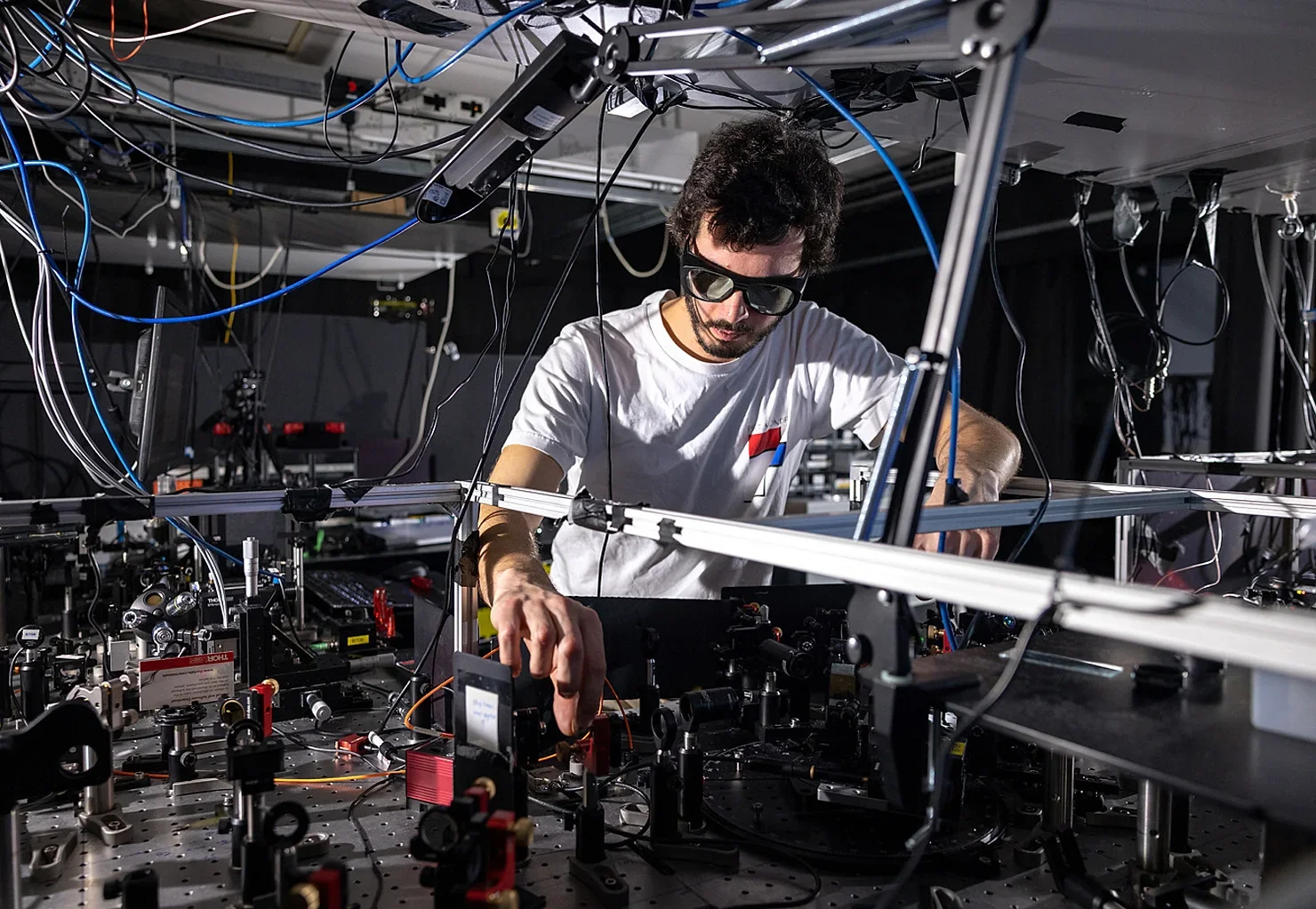

Some years ago, I did a simple experiment on a neural network that really helped me understand why adversarial examples are possible.

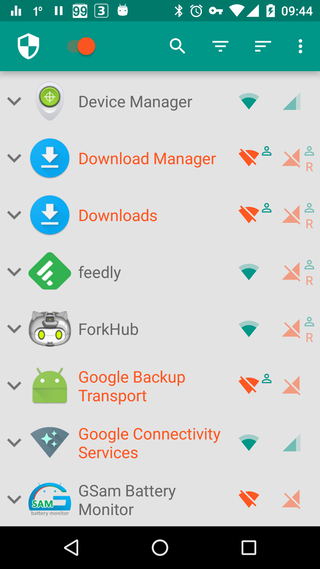

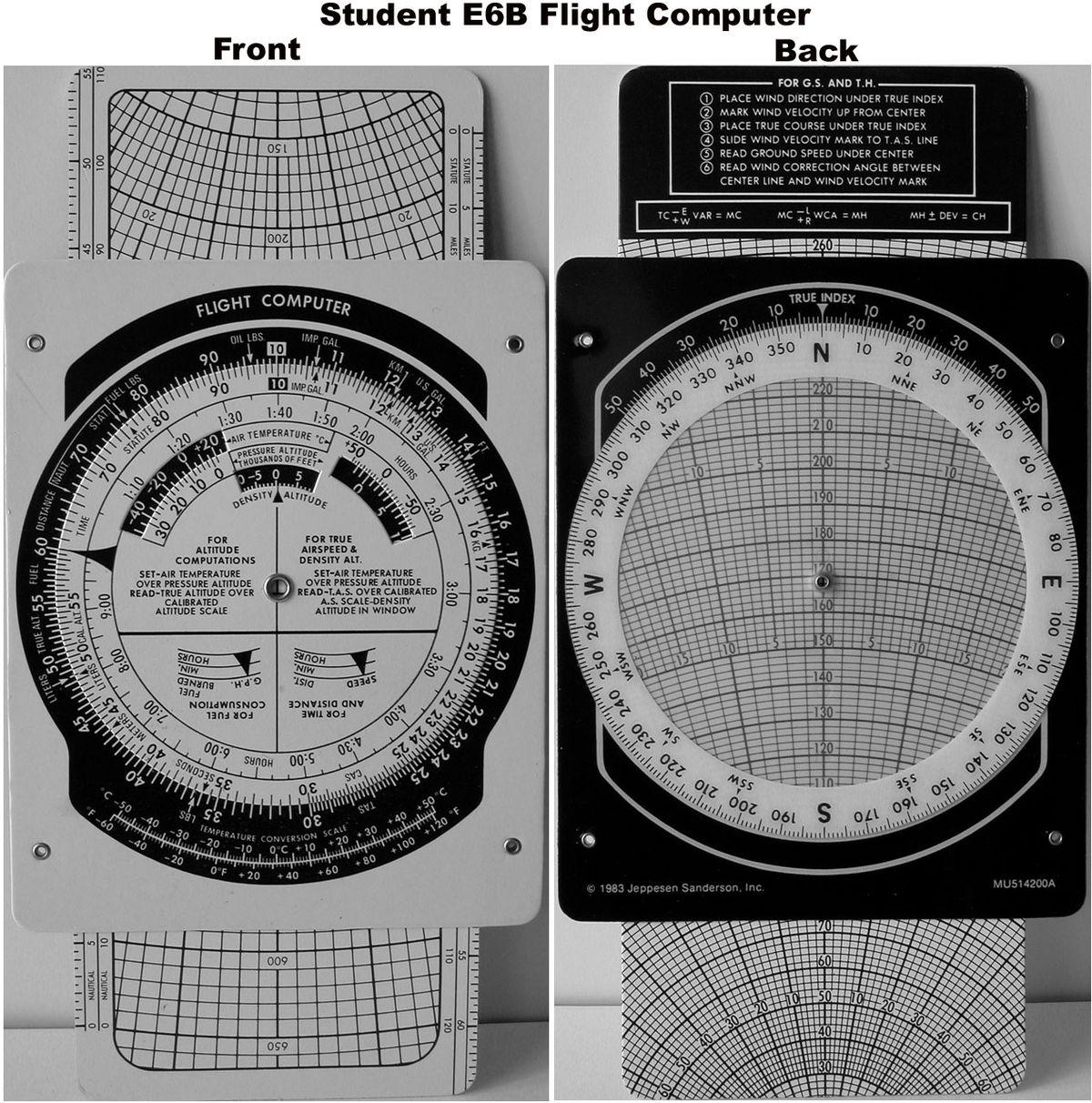

The experiment involved training a fully connected (not convolutional) neural network with several layers on the MNIST dataset of handwritten digits.
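To make the setup concrete, here is a minimal, dependency-free sketch of the forward pass of such a fully connected network. It is not the code from the original experiment: the layer sizes (`784, 64, 32, 10`), the ReLU nonlinearity, the random initialization, and the helper names `init_layer`, `dense`, `relu`, and `forward` are all assumptions chosen for illustration, and training is omitted entirely.

```python
import math
import random
from typing import List, Tuple

Matrix = List[List[float]]
Layer = Tuple[Matrix, List[float]]


def init_layer(n_in: int, n_out: int, rng: random.Random) -> Layer:
    """Random weights (n_out rows of n_in values) and zero biases."""
    scale = math.sqrt(2.0 / n_in)
    weights = [[rng.gauss(0.0, scale) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def dense(x: List[float], weights: Matrix, biases: List[float]) -> List[float]:
    """Fully connected layer: every output neuron sees every input value."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, biases)]


def relu(values: List[float]) -> List[float]:
    return [max(0.0, v) for v in values]


def forward(x: List[float], layers: List[Layer]) -> Tuple[List[float], List[List[float]]]:
    """Return the output logits and the activations of each hidden layer."""
    hidden = []
    for weights, biases in layers[:-1]:
        x = relu(dense(x, weights, biases))
        hidden.append(x)
    weights, biases = layers[-1]
    return dense(x, weights, biases), hidden


rng = random.Random(0)
sizes = [784, 64, 32, 10]  # 28x28 input pixels, 10 digit classes; hidden sizes are hypothetical
layers = [init_layer(n_in, n_out, rng) for n_in, n_out in zip(sizes, sizes[1:])]

image = [rng.random() for _ in range(784)]  # stand-in for one flattened MNIST digit
logits, hidden = forward(image, layers)
print(len(logits), [len(h) for h in hidden])
```

Returning the hidden activations alongside the logits is a deliberate choice here, since the rest of the experiment is about inspecting what individual neurons do rather than about classification accuracy.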

After training, I noticed that each input image activated only a small number of neurons in the network. For any given input image, there would be roughly 3 highly activated neurons and 10 mildly activated neurons, while the rest remained inactive. In other words, each image’s representation within the network was spread across only a handful of neurons.
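One way to check for the sparsity pattern described above is to sort a layer's activations into bands. The sketch below is only an illustration: the function name `summarize_activations`, the two thresholds (expressed as fractions of the layer's peak activation), and the example values are all made up rather than taken from the experiment.

```python
from typing import Dict, List


def summarize_activations(
    activations: List[float],
    high_threshold: float = 0.5,   # assumed cutoff: at least 50% of the peak
    mild_threshold: float = 0.1,   # assumed cutoff: at least 10% of the peak
) -> Dict[str, int]:
    """Count neurons per activation band, relative to the largest activation."""
    peak = max(activations, default=0.0)
    if peak <= 0.0:
        # Nothing fired at all, so every neuron counts as inactive.
        return {"high": 0, "mild": 0, "inactive": len(activations)}

    counts = {"high": 0, "mild": 0, "inactive": 0}
    for value in activations:
        ratio = value / peak
        if ratio >= high_threshold:
            counts["high"] += 1
        elif ratio >= mild_threshold:
            counts["mild"] += 1
        else:
            counts["inactive"] += 1
    return counts


# Made-up activations for one hidden layer of 20 neurons.
example = [0.0, 2.1, 0.0, 0.3, 0.0, 1.8, 0.0, 0.0, 0.4, 0.0,
           0.0, 0.0, 2.4, 0.0, 0.25, 0.0, 0.0, 0.0, 0.0, 0.0]
print(summarize_activations(example))
# {'high': 3, 'mild': 3, 'inactive': 14}
```

Measuring against the layer's own peak rather than a fixed absolute value keeps the bands comparable across layers whose activations live on different scales.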

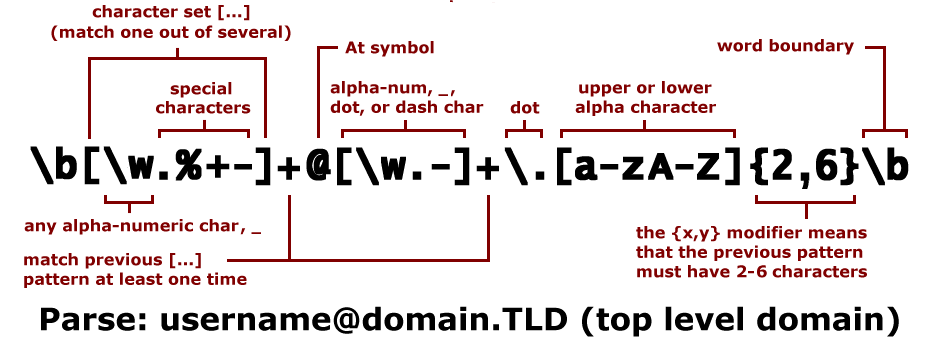

This led me to wonder what each neuron “does” in the network. Given any particular neuron, what does it detect? More precisely, what kind of features is it most attentive to in an input image?
