MIT takes down 80 Million Tiny Images data set due to racist and offensive content

Creators of the 80 Million Tiny Images data set from MIT and NYU took the collection offline this week, apologized, and asked other researchers to refrain from using the data set and delete any existing copies. The news was shared Monday in a letter by MIT professors Bill Freeman and Antonio Torralba and NYU professor Rob Fergus published on the MIT CSAIL website.

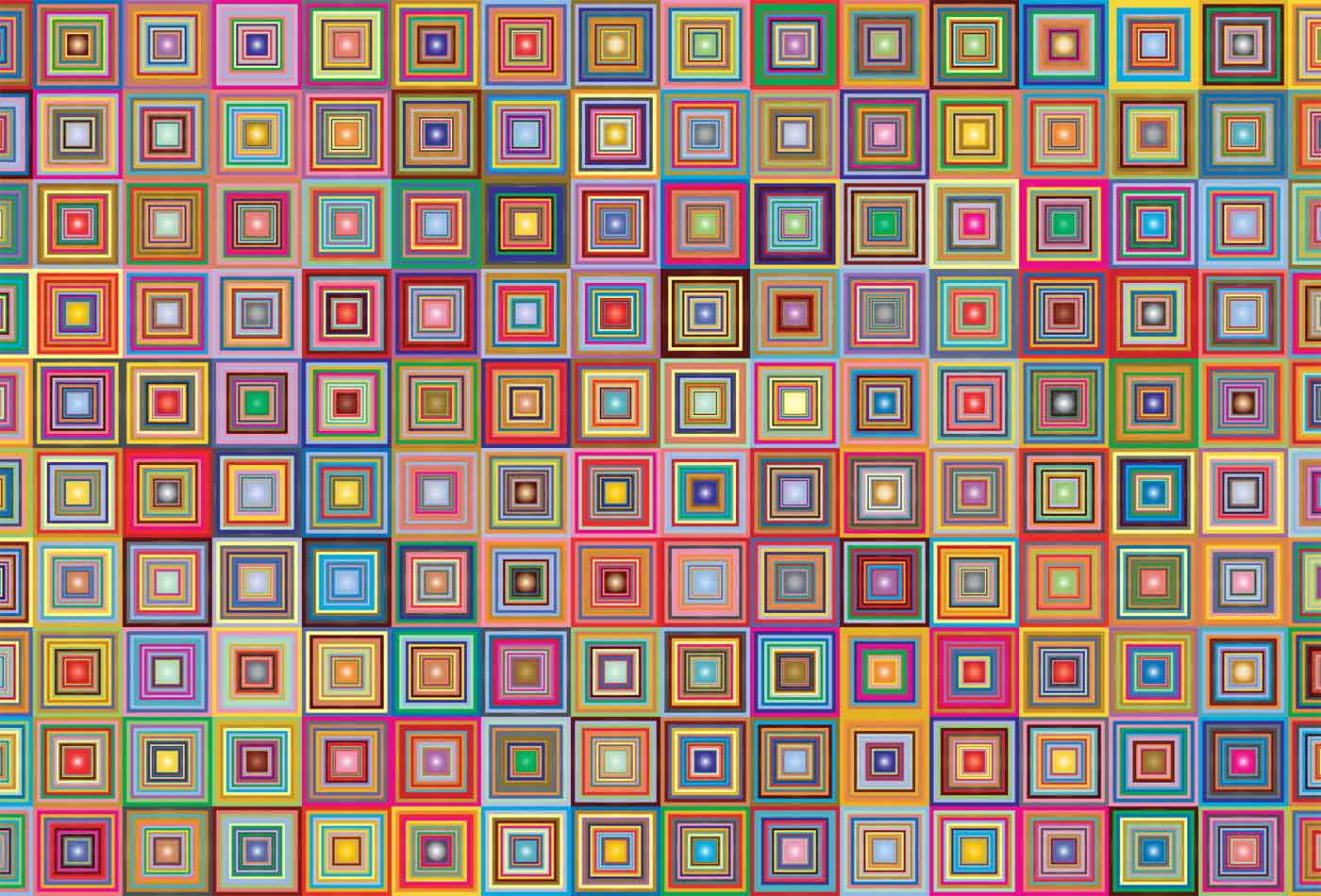

Introduced in 2006 and containing photos scraped from internet search engines, 80 Million Tiny Images was recently found to contain a range of racist, sexist, and otherwise offensive labels, including nearly 2,000 images labeled with the N-word and labels like “rape suspect” and “child molester.” The data set also contained pornographic content such as non-consensual photos taken up women’s skirts. Creators of the 79.3 million-image data set said it was too large and its 32 x 32 images too small, making visual inspection of the data set’s complete contents difficult. According to Google Scholar, 80 Million Tiny Images has been cited more than 1,700 times.

“Biases, offensive and prejudicial images, and derogatory terminology alienates an important part of our community — precisely those that we are making efforts to include,” the professors wrote in a joint letter. “It also contributes to harmful biases in AI systems trained on such data. Additionally, the presence of such prejudicial images hurts efforts to foster a culture of inclusivity in the computer vision community. This is extremely unfortunate and runs counter to the values that we strive to uphold.”