Cutting-edge Chinese “reasoning” model rivals OpenAI o1—and it’s free to download

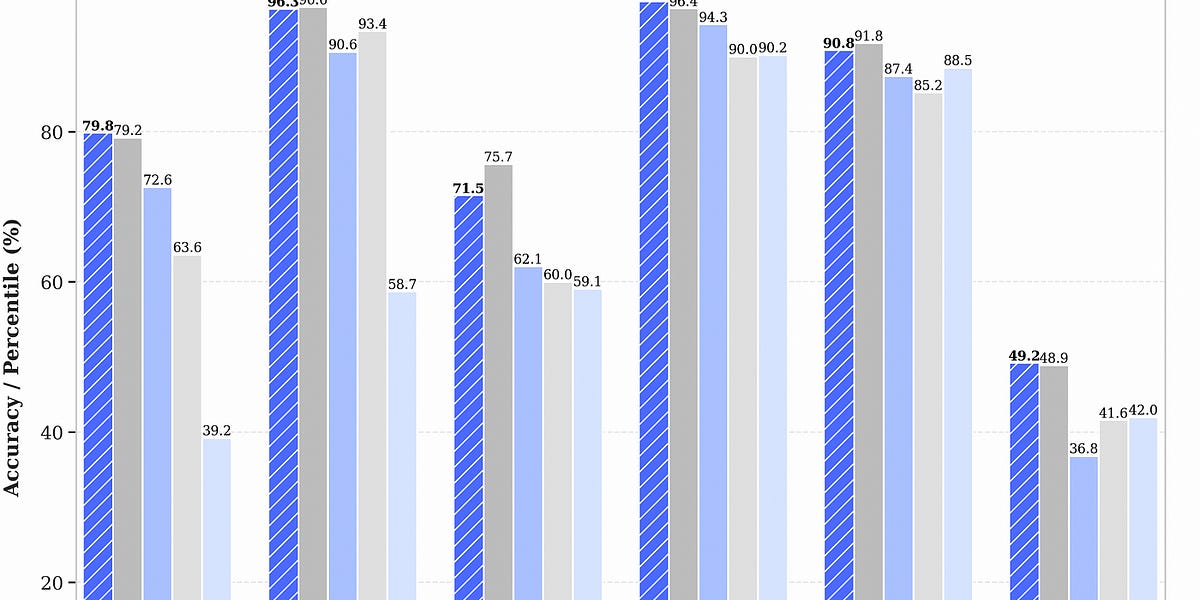

On Monday, Chinese AI lab DeepSeek released its new R1 model family under an open MIT license, with its largest version containing 671 billion parameters. The company claims the model performs at levels comparable to OpenAI's o1 simulated reasoning (SR) model on several math and coding benchmarks.

Alongside the release of the main DeepSeek-R1-Zero and DeepSeek-R1 models, DeepSeek published six smaller "DeepSeek-R1-Distill" versions ranging from 1.5 billion to 70 billion parameters. These distilled models are based on existing open source architectures such as Qwen and Llama and were trained on data generated by the full R1 model. The smallest version can run on a laptop, while the full model requires far more substantial computing resources.

The releases immediately caught the attention of the AI community because most existing open-weights models, which can often be run and fine-tuned on local hardware, have lagged behind proprietary models like OpenAI's o1 in so-called reasoning benchmarks. Having these capabilities available in an MIT-licensed model that anyone can study, modify, or use commercially potentially marks a shift in what's possible with publicly available AI models.

"They are SO much fun to run, watching them think is hilarious," independent AI researcher Simon Willison told Ars in a text message. Willison tested one of the smaller models and described the experience in a post on his blog. "Each response starts with a <think>...</think> pseudo-XML tag containing the chain of thought used to help generate the response," he wrote, noting that even for simple prompts, the model produces extensive internal reasoning before giving its output.
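For readers who want to separate that visible chain of thought from the final answer when running one of these models locally, a minimal Python sketch might look like the following. It assumes only what Willison describes: a single `<think>...</think>` block in the response text. The function name `split_reasoning` and the sample string are hypothetical illustrations, not part of any DeepSeek tooling or real model output.

```python
import re
from typing import Tuple

# Matches one <think>...</think> block; DOTALL lets "." span newlines,
# since the chain of thought usually runs over many lines.
THINK_RE = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_reasoning(response: str) -> Tuple[str, str]:
    """Return (chain_of_thought, answer) from a raw model response.

    Hypothetical helper: assumes at most one <think> block, as described
    in the article. If none is found, the reasoning part is empty.
    """
    match = THINK_RE.search(response)
    if match is None:
        return "", response.strip()
    reasoning = match.group(1).strip()
    # Everything outside the tag is treated as the user-facing answer.
    answer = (response[:match.start()] + response[match.end():]).strip()
    return reasoning, answer


# Made-up sample text for illustration only.
sample = "<think>\nThe user asked for 2 + 2.\nThat is 4.\n</think>\nThe answer is 4."
reasoning, answer = split_reasoning(sample)
print("Reasoning:", reasoning)
print("Answer:", answer)
```

A regular expression is enough here because the tag is described as pseudo-XML rather than well-formed markup, so a full XML parser would likely be overkill.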
