Sohu AI chip claimed to run models 20x faster and cheaper than Nvidia H100 GPUs

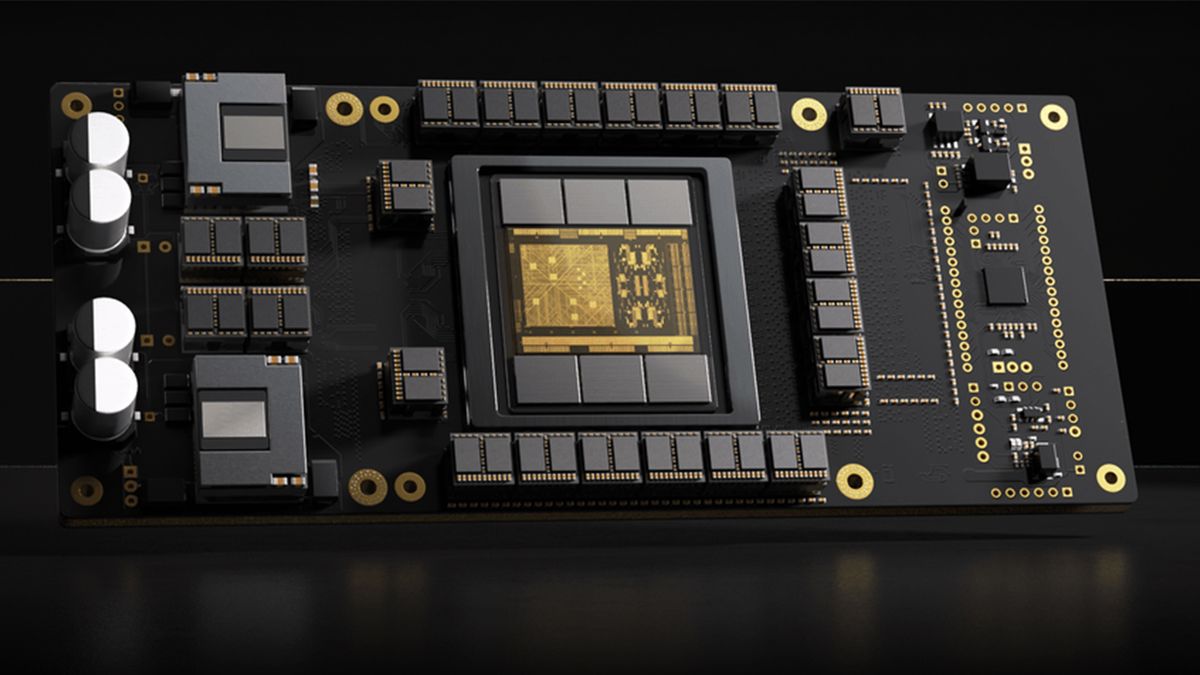

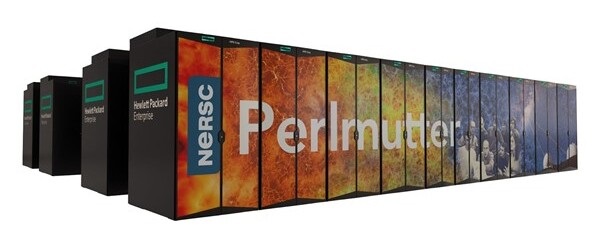

Etched, a startup that builds transformer-focused chips, has announced Sohu, an application-specific integrated circuit (ASIC) that the company claims beats Nvidia’s H100 at LLM inference. A single 8xSohu server is said to match the performance of 160 H100 GPUs, meaning data centers could save on both upfront and operational costs if Sohu meets expectations.
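The headline's 20x figure follows from that server comparison. As a rough sketch, assuming the claimed equivalence scales evenly across the eight chips (the function name here is illustrative, and the numbers are Etched's claims rather than independent measurements):

```python
def h100s_per_sohu(h100_equivalent: int, sohu_chips: int) -> float:
    """Return how many H100 GPUs one Sohu chip is claimed to replace."""
    return h100_equivalent / sohu_chips


# Etched's claim: one 8xSohu server matches 160 H100 GPUs.
ratio = h100s_per_sohu(h100_equivalent=160, sohu_chips=8)
print(f"Claimed per-chip advantage: {ratio:.0f}x")
```

The result is a ratio of vendor-claimed throughput only; it says nothing about power, price, or workloads other than transformer inference.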

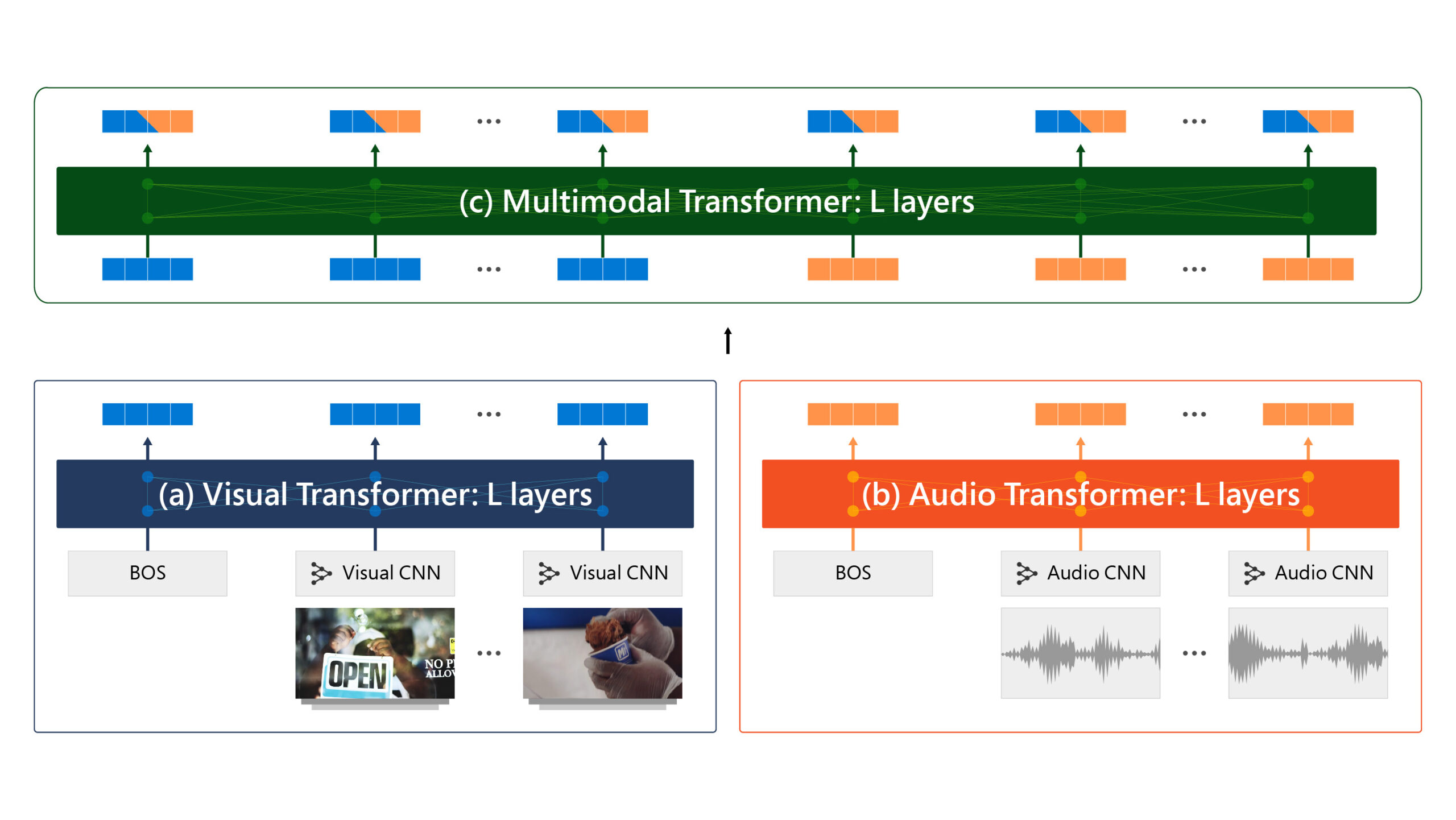

According to the company, current AI accelerators, whether CPUs or GPUs, are designed to work with many different AI architectures. Because of these differing frameworks and designs, hardware must support a range of models, such as convolutional neural networks, long short-term memory networks, state space models, and so on. Because these models are tuned to different architectures, most current AI chips devote a large portion of their computing power to programmability.

Most large language models (LLMs) use matrix multiplication for the majority of their compute tasks, and Etched estimates that Nvidia’s H100 GPUs use only 3.3% of their transistors for this key task. This means that the remaining 96.7% of the silicon is used for other tasks, which are still essential for general-purpose AI chips.
